What the MIT AI Study Means for Internal Communications

The MIT FutureTech AI study dropped in March 2026 and triggered the usual two reactions from internal communicators: panic or relief. Neither is right.

The study tracked AI performance across more than 17,000 real work tasks - not benchmarks, not coding puzzles, but tasks from actual jobs in the U.S. Department of Labor's O*NET database. And the category of work it focused on most heavily is cognitive, text-based, and communication-driven. That's not a coincidence for this audience. That's the job description.

The finding the headlines ran with: AI is advancing like a "rising tide," not a "crashing wave."

That's true. And it matters. But the more important half of that story, specifically for internal communicators, hasn't been written yet.

What the research found

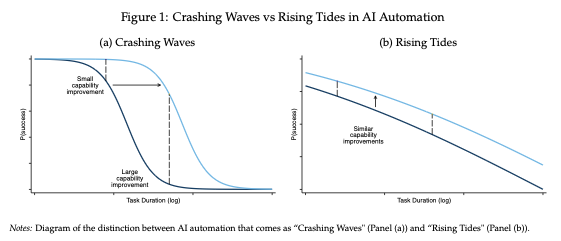

The study's central question is about the shape of AI progress. Does AI capability surge abruptly, suddenly conquering a narrow set of tasks it previously couldn't touch? Or does it improve more gradually, lifting performance broadly across many types of work at the same time?

The crashing wave scenario is the one that takes over the imagination. AI fails, fails, fails, then, without warning, it's doing your job better than you. Workers are blindsided. Industries and companies restructure overnight.

The rising tide scenario is different. Progress is steady, broad, and visible. No single occupation gets swamped without warning. The water just keeps climbing, slowly, everywhere at once.

MIT's data supports the rising tide view. Across text-based labor market tasks, AI performance improves at roughly the same rate regardless of whether a task takes 5 minutes or 8 hours for a human to complete. The relationship between task duration and AI success is surprisingly flat. Capability isn't surging dramatically on specific task types while leaving others untouched. It's rising broadly, across the board.

This distinction matters for how organizations and workers should respond. A crashing wave gives you almost no warning. A rising tide, even a fast one, is visible. You can see it coming. You can move.

The pace of that tide, though, is not slow. The study estimates that between mid-2024 and late 2025, AI went from completing tasks that take humans 3-4 hours to completing tasks that take humans a full week, both at a 50% success rate. Success rates across all task durations improved by 8-11 percentage points per year. That's not a trickle.
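To put a rough number on that pace, here is a back-of-the-envelope sketch of the doubling time those figures imply. It is not the study's own calculation. It assumes "a full week" means a 40-hour work week, takes 3.5 hours as the midpoint of the 3-4 hour range, and treats mid-2024 to late 2025 as about 18 months. The function name is made up for illustration.

```python
import math

# Figures from the paragraph above; the conversions are assumptions.
START_HOURS = 3.5       # midpoint of the "3-4 hours" range
END_HOURS = 40.0        # ASSUMPTION: "a full week" read as a 40-hour work week
ELAPSED_MONTHS = 18.0   # ASSUMPTION: mid-2024 to late 2025 taken as ~18 months


def implied_doubling_months(start_hours, end_hours, elapsed_months):
    """Months per doubling of task length, assuming steady exponential growth."""
    doublings = math.log2(end_hours / start_hours)
    return elapsed_months / doublings


months = implied_doubling_months(START_HOURS, END_HOURS, ELAPSED_MONTHS)
print(f"Implied doubling time: about {months:.1f} months")
```

Under those assumptions, the length of task AI can complete half the time doubles roughly every five months. Change the assumptions (a 168-hour calendar week, say) and the number moves, but the point holds: the tide is rising quickly.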

Why IC practitioners are standing in the water

Here's the part most coverage missed: this study is largely about you.

The researchers deliberately focused on text-based and partially text-based work, because that's what large language models address. They explicitly filtered out tasks where physical activity is the primary requirement. What they studied, heavily, is cognitive work that involves reading, writing, analyzing, synthesizing, planning and communicating.

That's internal communications.

The job families most relevant to what IC practitioners do - management, community and social service, educational instruction - all show meaningful AI capability right now, and all are improving at roughly the same rate as every other text-based domain. There's no exemption for "communications-adjacent" work. There's no asterisk that says "but strategic communications is safe."

The study's authors were candid about one important limitation: their current sample "qualitatively represents more white-collar occupations and under-represents blue-collar work." In other words, if your audience is frontline workers and you were hoping this research would tell you they're insulated from AI disruption, it can't tell you that. The data isn't there… yet.

What the data does tell you is that the work IC practitioners produce - the communications, the strategies, the plans, the messages - is precisely the category of work this study measured. And what it found should get your attention.

The problem hiding in "minimally sufficient"

Here's where the study gets interesting, and where most coverage stopped reading.

The benchmark the researchers used to define AI "success" is whether a hypothetical manager would accept the AI's output without edits, at a minimally sufficient quality level. On their 1-9 scoring scale, that's a 7. The definition: "Useful as is: Requires no edits to be minimally sufficient."

At that threshold, AI is already succeeding on roughly 60% of text-based tasks across all models in the survey. Frontier models perform considerably higher. And by 2029, the study projects that most text-based tasks will reach AI success rates of 80-95% at this threshold.

That sounds alarming. Until you look at what "minimally sufficient" actually means for communications work.

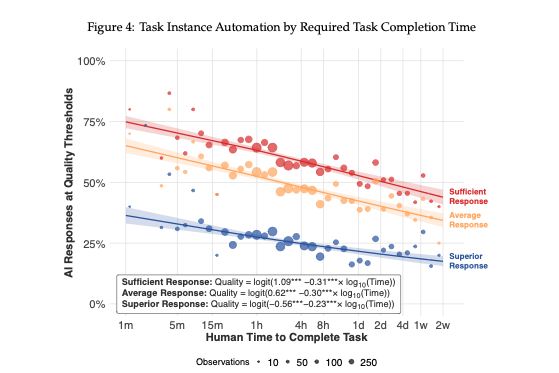

Look at Figure 4. The study plots three quality thresholds: sufficient (score of 7 or above), average (8 or above), and superior (9). Three different lines, with three very different trajectories.

The red line (sufficient quality) sits high. For short tasks, it hovers around 75-80%. Even for tasks that take humans a full day, it stays well above 50%. This is the "rising tide" line everyone's writing about.

The blue line (superior quality) tells a different story. For even the shortest tasks in the dataset, AI rarely clears 25% at the superior quality threshold. For longer tasks, it drops significantly further.

That gap between the red and blue lines is not a minor technical detail. It is the central fact that every IC practitioner needs to evaluate.

Internal communications isn't a field where "minimally sufficient" is good enough. You're writing messages that shape how employees understand major changes, trust their leadership, or feel seen by the organization during difficult moments. You're crafting communications that reach people at the beginning of a shift, on a factory floor, on a retail floor, without the luxury of a desk and twenty minutes of reading time. You're advising leaders on what to say and when. You're navigating the gap between what leadership wants to communicate and what employees actually need to hear.

A score of 7 on a 1-9 scale won't cut it for that work. And at the superior quality threshold, AI has a long way to go.

This is not an argument for complacency. The floor is rising. But where the floor is rising to, right now, is a place IC practitioners should already be well above.

Where the tide is headed

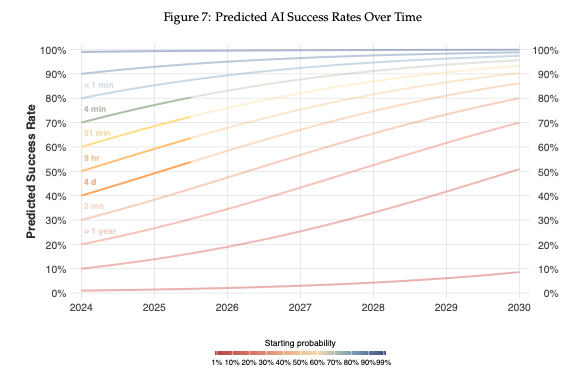

The study's projections through 2030 tell two stories simultaneously.

The curves climbing toward 80-95% by 2029 represent the sufficient quality threshold. That's a real number, and it represents real displacement of real work. Administrative communications, routine announcements, templated content, and standard operating procedure documentation are tasks where "good enough without edits" is arguably the right bar. AI will handle a growing share of that work, and organizations will make decisions accordingly.

The curves that barely move in that same timeframe are the ones for superior quality. The study is clear that "achieving near-perfect performance will take considerably longer" and that this provides a window for worker adjustment, particularly in tasks with low tolerance for errors.

Internal communications has very low tolerance for errors. A poorly worded message about a benefits change doesn't just inconvenience people; it erodes trust. A tone-deaf announcement during a period of organizational anxiety can confirm employees' worst fears. The error cost in IC is high, and it's largely invisible until it's too late.

That's the window the researchers are describing. It's not infinite. But it's real.
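For anyone who wants to check how the sufficient-quality numbers fit together, here is a simple straight-line sketch. It is not the study's projection method. It assumes the roughly 60% success rate is a 2026 figure and applies the 8-11 percentage points per year linearly. The names are illustrative only.

```python
BASELINE_YEAR = 2026          # ASSUMPTION: the ~60% figure treated as a 2026 value
BASELINE_RATE = 60.0          # percent, "roughly 60%" at the sufficient threshold
ANNUAL_GAINS = (8.0, 11.0)    # percentage points per year, the range cited earlier


def project(rate, gain_per_year, years):
    """Straight-line projection in percentage points, capped at 100%."""
    return min(100.0, rate + gain_per_year * years)


def projected_range(target_year):
    """Low and high projected success rates for the given year."""
    years = target_year - BASELINE_YEAR
    low, high = (project(BASELINE_RATE, gain, years) for gain in ANNUAL_GAINS)
    return low, high


low, high = projected_range(2029)
print(f"2029 (sufficient quality): {low:.0f}% to {high:.0f}%")
```

Under those assumptions the sketch lands at 84% to 93% for 2029, inside the 80-95% range the study projects. The same arithmetic says nothing about the superior-quality curves, which start far lower and which the study expects to take considerably longer.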

The argument for moving up, not waiting

The practical implication for IC practitioners is to stop defending the work AI will do adequately and get sharper, faster, and more deliberate about the work it can't do well yet. Fewer templated messages and more communications built around real employee experience. Less time on production and more time on the strategic question of what employees need to hear, when, and from whom. More investment in the judgment, organizational knowledge, and trust relationships that sit entirely outside what any model can be prompted to produce.

The study's authors note something worth spending time on: task automation and worker impact are not the same thing. Automating individual tasks doesn't automatically harm the employees who performed those tasks. It depends on how those tasks relate to the broader bundle of skills in an occupation, and what fills the space left behind. For IC, the tasks most susceptible to the rising tide are often the ones that were never the highest-value contribution anyway. The drafting. The formatting. The first pass.

What's less susceptible, at least for now, is the judgment layer. Knowing what employees are actually worried about. Understanding which leader has credibility and which doesn't. Sensing when a communications plan is technically correct but fundamentally tone-deaf. Reading the room across an entire organization.

That's still human work. And for comms practitioners who've been quietly doing it all along, the rising tide might actually create the conditions to finally get credit for it.

The water is coming up. Build on higher ground.

Written by Chuck Gose, founder of ICology.